I think it would be a useful new feature to add optical character recognition to Spectacle, as more websites make it impossible to select text on them. Something like Text Extractor utility from the MS PowerToys. Admittedly I am unsure how hard it would be to implement, but Spectacle seems to be the most fitting option for something like this.

I share my own OCR script here. It’s using Baidu OCR API and Spectacle to take a regional screenshot. Other APIs should be similar. Both X11 and Wayland are supported.

#!/usr/bin/env bash

client_id="abcdefg"

client_secret="hijklmn"

TMPFILE=$(mktemp)

TMPFILE2=$(mktemp)

trap 'rm -f "$TMPFILE";rm -f "$TMPFILE2"' EXIT

spectacle -r -o $TMPFILE -b -n

# [ "$XDG_SESSION_TYPE" = "x11" ] && xclip -selection clipboard -t image/png -o 2>/dev/null > $TMPFILE

# [ "$XDG_SESSION_TYPE" = "wayland" ] && wl-paste -n -t image/png 2>/dev/null > $TMPFILE

if [ -s "$TMPFILE" ]; then

base64 -w0 ${TMPFILE} > ${TMPFILE2}

auth_host="https://aip.baidubce.com/oauth/2.0/token?grant_type=client_credentials&client_id="${client_id}"&client_secret="${client_secret}

json_response=$(curl -s --retry-delay 1 --connect-timeout 10 --max-time 10 $auth_host)

token=$(perl -MCpanel::JSON::XS -ne 'print decode_json($_)->{access_token}' <<< $json_response)

if [ -n "$token" ]; then

ocr_host="https://aip.baidubce.com/rest/2.0/ocr/v1/general_basic?access_token="${token}

result=$(curl -s --retry-delay 1 --connect-timeout 10 --max-time 30 $ocr_host --data-urlencode image@${TMPFILE2} -H 'Content-Type:application/x-www-form-urlencoded')

words_result_num=$(perl -MCpanel::JSON::XS -ne 'print decode_json($_)->{words_result_num}' <<< $result)

[ "$words_result_num" -gt 0 ] && {

[ "$XDG_SESSION_TYPE" = "x11" ] && perl -MCpanel::JSON::XS -ne 'my $arrayref=decode_json($_)->{words_result};foreach my $i(@$arrayref){print $i->{words}}' <<< $result | xclip -selection clipboard

[ "$XDG_SESSION_TYPE" = "wayland" ] && perl -MCpanel::JSON::XS -ne 'my $arrayref=decode_json($_)->{words_result};foreach my $i(@$arrayref){print $i->{words}}' <<< $result | wl-copy

}

kdialog --passivepopup "OCR finished" 7 --title "OCR"

fi

fi

Why the need of an external/online service when you can safely use tesseract locally on your private/official documents?

For example ocrmypdf can do a pretty decent job:

Simply install ocrmypdf with the needed tesseract package language,

For example, on Arch/Manjaro you can install it from AUR:

yay -S ocrmypdf tesseract-data-eng

Then run:

ocrmypdf -l eng -f --sidecar input.pdf output.pdf

And finally open your output.pdf on Okular.

NB: For RTL (e.g Arabic, Hebrew,…) content, you need to open that output.pdf via any Chromium based browser, because copy/paste from OCR text layer will reverse generated characters.

Indeed, any kind of integration that got added to Spectacle would be using Tesseract locally, not an online service.

Of course there are existing OCR solutions, but what is suggested was the integration itself. I don’t want to save the whole screen as pdf then use ocrmypdf. I want to screencap only eg. 2 lines of text and have the text on my clipboard in 1/2 clicks. I think this use case is not unique to me, and Spectacle seems to be the application most suited for a feature like this.

If I wanted to use pdfs i could just print the whole webpages as pdf, no need for spectacle for that.

Maybe I explained it poorly in the post, but I linked the powertoys application as an example of seamless integration.

ocrmypdf is just an example that makes it easy for tesseract to import PDF files, because tesseract doesn’t yet support reading PDFs.

tesseract supports png, jpeg/jpg, tiff… as input formats, and can produce OCR content as text, [searchable PDF/A-xa] pdf, hocr…

Local OCR looks like a toy when they meet Chinese characters, so that’s why a reliable online service is needed.

If that is to be implemented, both “a reliable online” and a local service should be available, I think.

Opening up a large privacy gap for all user groups for the gain of one, albeit large, is not a good idea.

Also, there should be a fat warning before screenshot contents leave the local machine.

The thought of a third party looking at my documents is just frightening. Not knowing who they are is even more frightening.

Local OCR looks like a toy when they meet Chinese characters

I don’t know Chinese, may be the provided trained model needs some additions for more fonts.

I used tesseract heavily on many long scanned documents written in Arabic and French plus English, and the result is quite good, especially with the ability to add OCR text layer on the final PDF.

Hi medin.

Thanks for the info about Tesseract. Works great.

Plan to make a service menu out of it like I do to everything.

Vektor

We can have multiple OCR backends, just like we have multiple Phonon backends.

Maybe we can have a KAI daemon, with multiple backends for OCR, voice recognition, etc.

agree, that OCR should be an integral part of screen capturing. even my phone offers to extract text - when recognized - instead of taking pictures.

so far it takes two steps to get text out of a screenshot for me with:

I also want to give my opinion as a Spectacle and KDE Plasma user. I saw in posts with people talking about tools that do this in Windows and Mac OS, it would be very interesting to have something like this integrated into Spectacle, serving to copy text from somewhere on the screen and convert everything into text to be pasted somewhere. Preferably with an offline engine, for obvious privacy reasons.

Not only Chinese, Russian is too. You can add default (local) and other option (online)

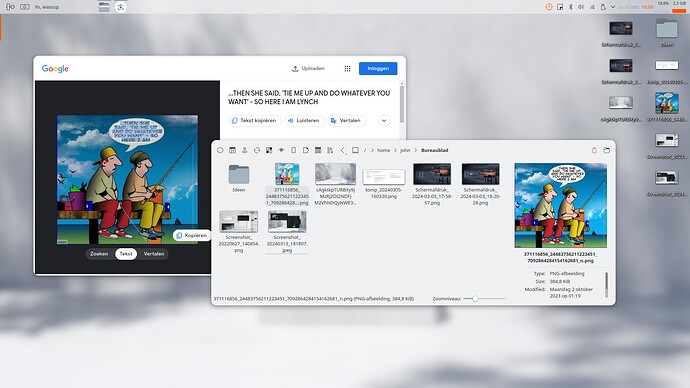

Given up on all the tesseract stuff and simply made me a Lens ssb. Upload or drag 'n drop. Doesn’t require login and you can add/set all the additional stuff you want in the profile.

Text bubbles, road signs…etc etc…, works all the time, everytime.

Here’s a screenshot…of that screenshot. Good luck doing that with tesseract.

Hey Dzon

What is Lens ssb ?

Vektor

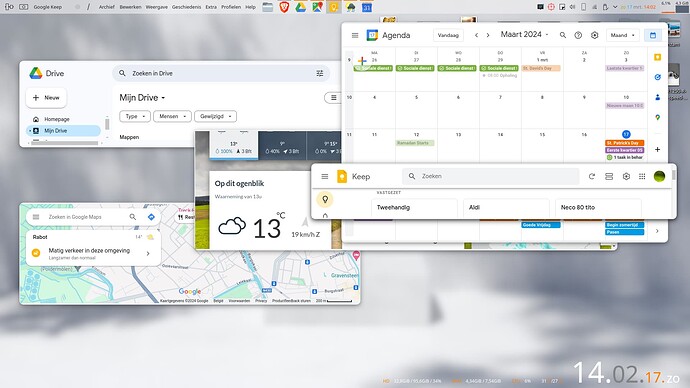

An ssb is short for Site Specific Browser. Back in the days, Peppermint OS was the first to come up with a gui tool to make those. It’s not quite the same as chromium apps ( or firefox apps from times past). They run in their own profile.

You could do similar by creating separate profiles from your browser of choice and run a single specific site on it. The advantages are numerous. Security/privacy comes to mind but you can also use specific addons/extensions which you only need for that specific site. Furthermore, me personally I use a bunch of them as…um…applications an sich so to speak. For example, imho there’s not one single proper note tool available. For my usecase, they all kinda s…k bigtime. So I figured, since I use an android phone I have a google account I might just as well use Keep as a note taker ( as such I have it on my phone as well). So, I do the same with, say, Drive, Maps, Photos… This lens ssb is nothing more than Google Lens in…um…application form. You can say what you want, but if you want proper ocr, you need the more “advanced” stuff. In that regard, Google lens uses that. So that particular one is Google Lens ssb.

If anyone wants a simpler bash script to use spectacle and tesseract:

#!/usr/bin/env bash

TMPFILE=$(mktemp)

trap 'rm -f "$TMPFILE"' EXIT

spectacle -r -o $TMPFILE -b -n 2>/dev/null

if [ -s "$TMPFILE" ]; then

if [ "$XDG_SESSION_TYPE" = "x11" ]; then

tesseract $TMPFILE - | xclip -selection clipboard

fi

if [ "$XDG_SESSION_TYPE" = "wayland" ]; then

tesseract $TMPFILE - | wl-copy

fi

kdialog --passivepopup "OCR finished" 7 --title "OCR"

fi

Requires tesseract and either xclip or wl-clipboard to be installed of course. Set it in KDE as a keyboard shortcut to launch it and you’re ready to go.

I would like to add my support for this idea. It’s such a useful thing to have… particularly if you get a screenshot of someone’s account details that they then expect you to type it down manually, for example >__>

I often found that the conversion quality in tesseract is hit-or-miss when converting PDFs. I’m sure it’s mostly a matter of tweaking the settings however. I’m guessing that with the right default settings, tesseract can be made very reliable for screenshots.

There’s the concern of chinese characters, and that a cloud based system should be used. In my opinion, this goes against the general practice of FOSS apps. Everything in the process should be controlled by you. However, being able to hook to a cloud service as an option might be good to have provided that there’s an open standard for this. It’s also worth noting that tesseract does support chinese characters. Can’t say how well it works though